Blog

SAMA CTI Principles: How RST Cloud Facilitates Compliance

RST Cloud can help organizations comply with the SAMA CTI Principles and bolster their security posture.

January 25, 2024

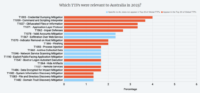

Top 20 TTPs in 2023: Australia compared to the World

This retrospective analysis of the tactics, techniques, and procedures (TTPs) employed by cybercriminals aims to help cybersecurity professionals plan how to strengthen their defence strategies. It highlights the top 20 TTPs specific to Australia, drawn from RST Cloud's examination of cyber threats in 2023 using RST Report Hub…

January 22, 2024

Guide to SAMA approach to CTI with RST Cloud

The Saudi Central Bank (Saudi Arabian Monetary Authority – SAMA) recognises the role that CTI plays in enhancing cybersecurity. See how to meet its requirements.

October 20, 2023